Paradigms of Architectural Knowledge

What to Do When Knowledge Becomes Data

By Zachary Tate Porter

Architects are, by definition, futurists. Entrusted with the fate of our built environments, they are routinely asked to look beyond the incompleteness of the present in order to invent new spatial realities. Given this responsibility, it is not surprising that architects have developed such a keen interest in technological innovation. After all, technology is often the most conspicuous marker of a rupture between past and future. Consider, for instance, Le Corbusier’s fascination with the automobile, or Ludwig Mies van der Rohe’s preoccupation with the steel I-beam: two technological artifacts that simultaneously served as both generative forces and symbolic emblems for modernity as we know it today (see image 1). Yet, it is important to remember that technology alone does not determine the future as if it were some unstoppable, autonomous force. Instead, technology is merely one factor within an expansive matrix that enables many possible futures. And if it is technology’s tangible nature that has allowed architects to associate it so closely with various futurisms, then perhaps it is worthwhile to consider a less tangible entity that also plays a central role in the advancement of society: knowledge.

Specialized knowledge has occupied a privileged position within Western conceptions of architectural practice since the fifteenth-century “invention” of the architect-as-intellectual.(1) Like technology, architectural knowledge is neither static nor absolute, but changes over time in ways that can be either incremental or transformative. Around the turn of the twentieth century, for instance, American architectural practice underwent a major transformation as the knowledge paradigm shifted from embodied to codified forms of expertise. Such a shift, which was accompanied by rapid technological innovations in building materials and construction methods, ultimately laid the foundations for architecture’s transition from a craft to a profession. More than a century later, contemporary architects find themselves on the cusp of another fundamental shift in the knowledge paradigm, one propelled by artificial intelligence, machine learning, and other forms of advanced computation. Close examination of architectural knowledge and its historical relationship with technology provides a critical vantage point from which architects can speculate on this newest wave of technological innovation and its impending implications for future practice.

FROM EMBODIED EXPERTISE TO CODIFIED KNOWLEDGE

From 1911 to 1913, The Brickbuilder (precursor to the now-defunct periodical Architectural Forum) published a series of articles showcasing the office environments of several prominent New York architects.(2) In addition to the rooms that one might expect to find in an architect’s studio—the drafting room, the executive office, the reception area, and so forth—there was one recurring space that quietly reflected the modernization of practice: the library. Present in nearly every office surveyed, the library signalled a paradigm shift in architectural knowledge (see image 2). Whereas previous generations of architects had relied on embodied forms of expertise passed down from master to apprentice through the tradition of office training and mentorship, the increased complexity of modern building technologies compelled architects of the late nineteenth and early twentieth centuries to codify architectural knowledge in a much more systematic manner. As a result, knowledge was displaced from the architect’s mind and body, and ultimately rendered as discrete pieces of information.

The codification of architectural expertise was an imperative for early modern practitioners. By the turn of the twentieth century, the discipline’s knowledge base had become so complex and multifaceted that it was no longer feasible for any single architect to internalize all of its technical, theoretical, and historical components.(3) It was in this context that the library took on a renewed importance, serving as an extension of the architect’s brain. Of course, the architectural library was by no means an invention of the twentieth century, as one would undoubtedly find a robust collection of books in the studio of any respectable pre-modern practitioner. However, the types of books collected and their intended purpose made the twentieth-century library fundamentally different from its predecessors. Alongside theoretical texts and historical monographs—staples of the nineteenth-century library—modern architects filled their shelves with various handbooks, reference books, and manuals of practice, which compiled vast amounts of technical information related to all aspects of design and construction (see image 3).(4) These repositories of explicit knowledge gradually replaced the discipline’s reliance on rules of thumb and other forms of tacit expertise.

Oftentimes, the library’s location within the office underscored the heterogeneity of architectural knowledge. In the office of Cass Gilbert, for instance, the library occupied a central position between the drafting room, on one side, and a corridor of executive offices, on the other. Such placement indicates that the library was a useful resource for both the vocational work of draftsmen and the intellectual preoccupations of the firm’s senior partners.(5) Here, among shelves containing both historical treatises and freshly printed handbooks, several lines of architectural thought intersected and intertwined.

The paradigmatic shift from embodied expertise to codified knowledge had dramatic and far-reaching implications for twentieth-century architectural production. Most importantly, the codification of architectural knowledge catalyzed the larger project of professionalization, which was well underway in the United States during this period. Unlike the embodied expertise of a craftsman, codified knowledge could be standardized within professional handbooks and manuals of practice, disseminated through textbooks and university degree programs, and tested on professional licensure exams (see images 4-5). In essence, the entire enterprise of professionalization relied on this paradigmatic shift in architectural knowledge. Over time, these developments elevated the status of the architect within American society. Rather than artisans or builders, architects of the early twentieth century positioned themselves as learned professionals, whose authority was justified by their curation and management of an expansive, heterogeneous knowledge base.

So, what does this historical backstory have to do with our contemporary situation? For starters, it illustrates the ways in which technological innovation shapes the relationship between architects and their expertise. And with artificial intelligence and other forms of advanced computation on the not-so-distant horizon, this historical context might also help architects prepare for another fundamental shift in the discipline’s knowledge paradigm. Just as the codification of knowledge had significant consequences for twentieth-century practice, the transformation of knowledge into computational data will also facilitate an entirely different model of architectural production in the twenty-first century.

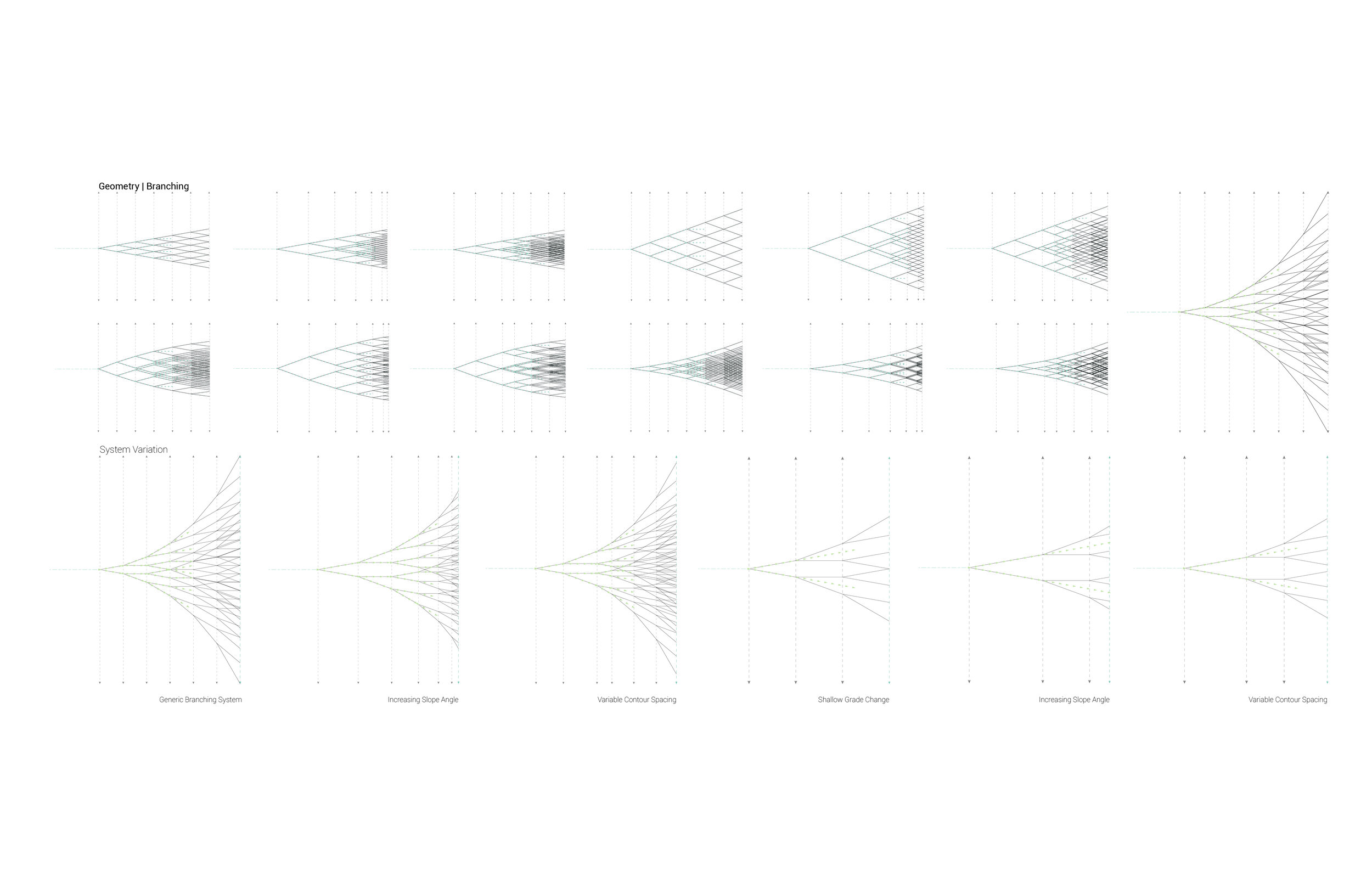

A NEW KNOWLEDGE PARADIGM: BIG DATA

Responding to the shift from embodied expertise to codified knowledge, modern practitioners developed practical strategies for filtering and querying the field’s ever-expanding databases. Under such a paradigm, it was not so critical that a professional architect be able to memorize large amounts of information so long as a specific calculation or figure could be quickly located when needed. Two decades into the twenty-first century, however, contemporary architects find themselves on the cusp of another paradigm shift in architectural knowledge. Increasingly, the task of managing and analyzing large sets of information is being performed not by human operators, but instead by digital platforms that employ artificial intelligence and neural networks, among various other forms of cognitive computing. In such a scenario, knowledge is transformed into data that can be aggregated and parsed with limited external guidance or supervision (see image 6). Despite the uncomfortable nature of this transformation, computational technologies easily outpace human capabilities by any metric of efficiency or optimization. For better or worse, architects will have to redefine their relationship to knowledge in order to remain relevant within the twenty-first century (see image 7). The first step toward such a goal is to recognize that cognitive computing platforms represent more than just another tool within the architect’s toolbox. Instead, machine learning and other applications of artificial intelligence have the potential to radically transform practice on its most fundamental levels. (6)

While contemporary architects may be tempted to lament their limited agency in the shadow of advanced computation, it is critical that the discipline maintain its sights on the future rather than the past. The artificial intelligence revolution will proceed with or without architects and, inevitably, the existing knowledge paradigm that undergirds architectural production will be replaced. With no guarantee that the profession’s legal structures for jurisdictional authority (i.e. accredited education and licensure requirements) will survive this transition, architects are at risk of losing much of the power that they have traditionally wielded within society. Amazon, Google, and Facebook, alongside several other major technology companies, have invested an unprecedented amount of resources in the development of digital platforms capable of analyzing increasingly large datasets; it is only a matter of time before they turn their attention to the built environment. To keep pace, architects must proactively redesign their knowledge infrastructure around the innovative potentials of cognitive computing.

The benefit of embracing this shift toward big data and cognitive computing is that it offers the opportunity for architects to address problems they have encountered within the existing knowledge paradigm. For instance, some architectural educators, including Thomas Fisher and Renée Cheng, have critiqued the way knowledge is handled and shared within architectural practice, especially when compared to the sciences. On the one hand, they argue, scientists take pride in the construction of a singular, collective body of knowledge bolstered by the evidence-based results of controlled experiments, which are published, peer-reviewed, and reproducible. On the other hand, architects often view knowledge as proprietary; hence, the discipline’s supportive stance on intellectual property, originality, and authorship. Such a discrepancy has led Fisher and Cheng, among others, to lament the “broken knowledge loop” within architectural practice. (7) According to these critics, architecture’s reliance on intellectual property has made practice less proficient and effective by obstructing the sharing of knowledge across the profession. As artificial intelligence is integrated into architectural practice over the next decade, this model of proprietary knowledge could be completely reoriented toward a collaborative, shared approach. The radical potential of such a non-proprietary model can already be seen in many alternative currents of contemporary practice, including the Open Building Institute and WikiHouse, among others. (8) And while these alternative models may bring their own limitations and dilemmas, architects would be wise to let go of past ideals in order to better adapt to the emerging knowledge paradigm.

In addition to rethinking the sharing protocols for architectural knowledge, architects might also prepare for future practice by reflecting on the conceptual nature of data and its role within architectural production. For instance, Johanna Drucker’s distinction between data (which is passively given) and capta (which is actively taken) would be a useful starting point for such a discussion. (9) Through this distinction, Drucker emphasizes the ways in which knowledge is constructed (rather than empirically observed) within the humanities. For architects, Drucker’s argument might support new capta-collecting initiatives or lead to critiques of the existing datasets. But regardless of how these issues get parsed, architects need to be actively shaping the infrastructure for future practice, rather than waiting for cognitive computing to reach a point of technical implementation. After all, as the historical shift from tacit expertise to codified knowledge illustrates, there are significant implications embedded in the relationship between architects and their heterogeneous knowledge base.

In the early twentieth century, the codification of architectural knowledge was intertwined with various forms of standardization. With the rise of mass production, for instance, catalogues of standard products replaced building components that would have previously been customized and site-specific. At the same time, performance criteria for these products and other building materials were also standardized and listed in manuals of practice for easy reference. As digital computation and big data reset the limits and tolerances for architectural design, will standardization fall by the wayside? Or will new standards be developed? Scholars of the first digital turn, such as Mario Carpo, predicted a future of mass customization in which each building component could be unique at no added cost. (10) And while such a future is now technically possible, architects must approach these issues with an understanding of how architecture’s material manifestations are intertwined with less tangible dynamics, such as labour relations, regulatory frameworks, and, of course, knowledge paradigms.

While transformations within architectural knowledge are not nearly as conspicuous as technological innovations in construction methods or building materials, their impact on architectural practice is arguably just as profound. As already discussed above, the shift from embodied expertise to codified knowledge was critical for the professionalization of architecture in the late nineteenth and early twentieth centuries. One curious consequence of professionalization, however, was its affirmation of the nation-state as the primary framework for practice. In the United States, for instance, the American Institute of Architects relied heavily upon the American legal system to protect its members’ jurisdictional authority from unlicensed competitors. Ironically, such a dynamic was in direct contradiction to the avant-garde’s vision for a transnational discourse on architecture. With digital and cloud-based platforms facilitating an increasingly global society, some architects might wonder whether it still makes sense to ground their expertise within nationally constituted professions.

Since any shift within architecture’s knowledge paradigm recalibrates the discipline’s core values and methodologies, the integration of artificial intelligence and other forms of cognitive computing requires theoretical, historical, and ethical guidance from the profession as a whole. As the physical library gives way to the digital server, will knowledge be shared amongst architects through a centralized network, or will it remain proprietary and decentralized? Should architects abandon their existing professional infrastructures and standards for practice, or retool them for the age of artificial intelligence? How can the heterogeneity of architectural expertise be synthesized and simulated through advanced computation? Rather than technical details, these questions cut to the core of architecture’s values and responsibilities within contemporary society. And while their answers may not yet be apparent, they point to systemic disruptions that await just beyond the horizon of the present.

Endnotes

(1) Architectural historians like James Ackerman and Mario Carpo have described the Renaissance as the period in which architects distanced themselves from the construction site (through the advent of measured drawings) to emerge as intellectuals, rather than artisans or craftsmen. For more detailed accounts of this historical development, see James Ackerman, Origins, Imitation, Conventions (Cambridge, MA: MIT Press, 2002) and Mario Carpo, The Alphabet and the Algorithm (Cambridge, MA: MIT Press, 2011).

(2) See D. Everett Waid, “How Architects Work,” The Brickbuilder 20 (1911): 249–253 and D. Everett Waid, “How Architects Work, II,” The Brickbuilder 21 (1912): 8–10.

(3) In his 1916 summary of the previous twenty-five years of architectural practice in the United States, A.D.F. Hamlin commented on the increasing burden of technical knowledge: “The requirements laid upon the architect have enormously increased the complexity of his task, and the struggle of competition has become intense beyond the limits of a generous and enthusiastic emulation. The commercializing of large building operations has raised new and often embarrassing problems of professional ethics and practice.” A.D.F. Hamlin, “Twenty-Five Years of American Architecture,” Architectural Record 40, no. 1 (July 1916): 2.

(4) Such useful reference books included Architectural Graphic Standards, Kidder’s Architects’ and Builders’ Pocket-book, Carnegie’s Pocket Companion for Engineers, Architects, and Builders, and Sweet’s Catalogue of Building Construction, among countless others.

(5) For a thorough analysis of the intra-office dynamics between American architects and draftsmen in the early twentieth century, see George B. Johnston, Drafting Culture: A Social History of Architectural Graphic Standards (Cambridge, MA: MIT Press, 2008).

(6) This “tool argument” persists throughout much of the recent literature on architecture and artificial intelligence. For instance, in an article written for the AIA, Kathleen O’Donnell suggests, “Architects should see artificial intelligence as an opportunity—a tool to augment practice, replacing mundane tasks—not as a threat to their jobs.” Kathleen M. O’Donnell, “Embracing Artificial Intelligence in Architecture,” last modified March 2, 2018, www.aia.org/articles/178511-embracing-artificial-intelligence-in-architecture.

Similarly, Phil Bernstein has argued for splitting the mundane, “tame” problems from the open-ended, “wicked” ones, noting that artificial intelligence is unlikely to compete with human problem solving in the near future. Phil Bernstein, “How Can Architects Adapt to the Coming Age of AI,” last modified November 22, 2017, www.archpaper.com/2017/11/architects-adapt-coming-ai/.

By contrast, Daniel Susskind describes a very different vision for the future of cognitive computing. While Susskind acknowledges that artificial intelligence might initially function as a tool to optimize existing practices, he contends that, over time, “our traditional professions will be steadily dismantled.” Daniel Susskind, “The way we’ll work tomorrow,” last modified March 8, 2016, www.ribaj.com/intelligence/the-future-of-architecture. See also Richard Susskind and Daniel Susskind, The Future of the Professions: How Technology Will Transform the Work of Human Experts (Oxford: Oxford University Press, 2015).

(7) For discussion of the “broken knowledge loop,” see “Closing the Knowledge Loop: An Interview with Renée Cheng.” Interview by Emily Grandstaff-Rice, DesignIntelligence, December 11, 2015, accessed May 21, 2018, www.di.net/articles/closing-the-knowledge-loop-an-interview-with-renee-cheng/.

(8) For a more robust discussion of these issues, see Digital Property: Open-Source Architecture, eds. Wendy W. Fok and Antoine Picon (Oxford: Wiley, 2016), Carlo Ratti and Matthew Claudel, Open Source Architecture (New York: Thames & Hudson, 2015), and Paradigms in Computing: Making, Machines, and Models for Digital Agency in Architecture, eds. David Gerber and Mariana Ibañez (Los Angeles: eVolo Press, 2014).

(9) According to Drucker, “Capta is ‘taken’ actively while data is assumed to be a ‘given’ able to be recorded and observed,” in: Johanna Drucker, “Humanities Approaches to Graphical Display,” Digital Humanities Quarterly 5, no. 1 (2011), accessed May 21, 2018, digitalhumanities.org/dhq/vol/5/1/000091/000091.html.

(10) See Mario Carpo, The Alphabet and the Algorithm (Cambridge, MA: MIT Press, 2011).

Bio

Zachary Tate Porter is Assistant Professor of Architecture at the University of Nebraska-Lincoln. He holds a PhD in Architectural History from the Georgia Institute of Technology, as well as a Master of Architecture from the University of North Carolina at Charlotte. He has previously taught at SCI-Arc and the University of Southern California.